Manual Testing is NOT Dead — It’s Becoming Elite (Part 2)

Why Automation Will Never Be Enough

Automation is powerful, and AI is moving faster than most teams are ready for, so it's worth asking honestly:

- If machines can test at scale, what's left for humans to do?

The answer matters, because it challenges a belief many people have already accepted: that automation alone is enough.

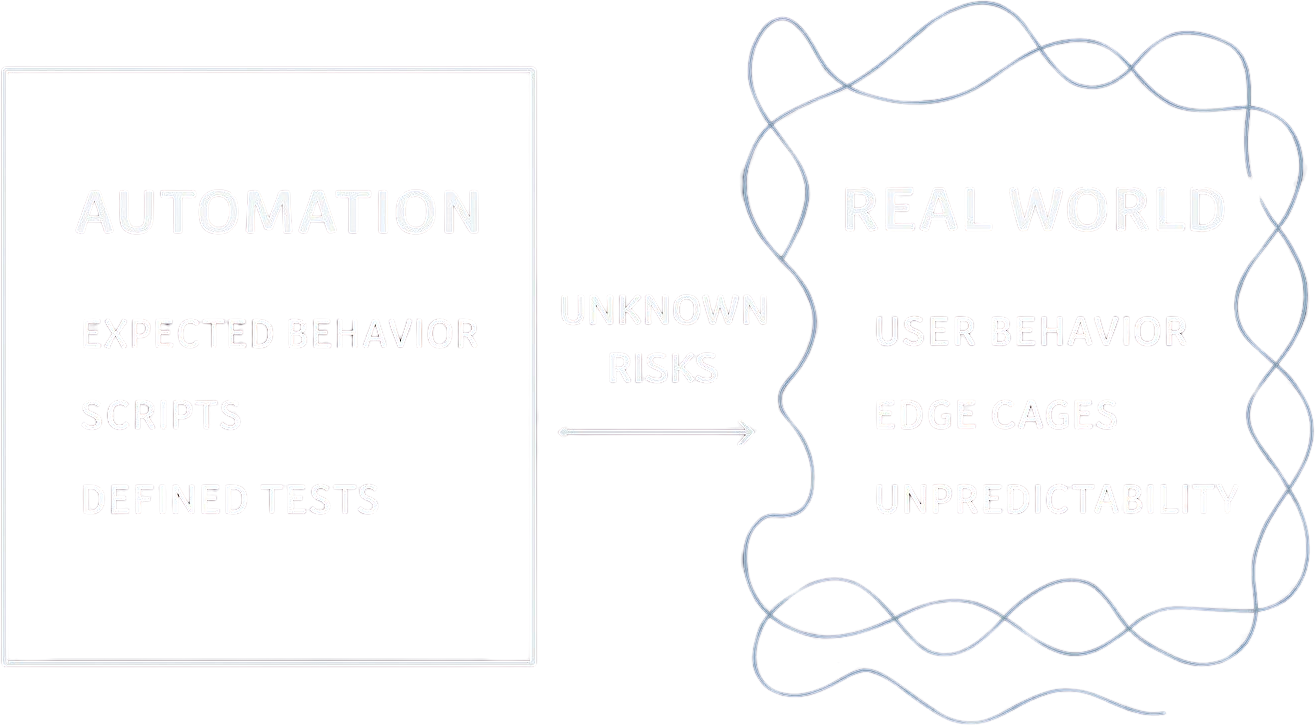

Automation Works Inside Boundaries

Automation is incredibly efficient.

It:

Executes predefined steps

Validates expected outcomes

Runs thousands of checks in minutes

But all of this happens within one constraint:

👉 It only operates within boundaries you define.

And here’s the problem:

Real-world systems don’t fail inside boundaries.

They fail outside them.
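A minimal sketch of that boundary, using a hypothetical `apply_discount` function and a made-up list of scripted cases (neither comes from a real codebase). Every scripted check passes, yet an input nobody listed produces a negative price:

```python
def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: price after a percentage discount."""
    return price * (1 - percent / 100)


# The boundary the team defined: only these (price, percent, expected) cases run.
SCRIPTED_CASES = [
    (100.0, 0.0, 100.0),
    (100.0, 10.0, 90.0),
    (100.0, 50.0, 50.0),
]


def run_suite() -> bool:
    """Return True when every scripted case matches its expected value."""
    return all(
        abs(apply_discount(price, percent) - expected) < 1e-9
        for price, percent, expected in SCRIPTED_CASES
    )


suite_is_green = run_suite()

# An input outside the scripted boundary, e.g. a 150% coupon entered by mistake.
outside_boundary = apply_discount(100.0, 150.0)

print("suite green:", suite_is_green)
print("price with 150% discount:", outside_boundary)
```

The suite reports green because it was only ever asked about the three cases it knows. The negative price sits outside them, where no check is looking.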

What Automation Can't Ask

Automation answers one question extremely well:

✔ Did the system behave as expected?

But it cannot ask:

❌ Was the expectation correct in the first place?

No framework, tool, or AI model can fully close that gap. It takes someone who can question the assumption before the test is ever written.
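To make this concrete, here is a small sketch built on an invented requirement ("orders over 50 ship free") and a hypothetical `shipping_fee` function. The threshold and fee values are assumptions for illustration only. The test shares the code's reading of the requirement, so it passes:

```python
FREE_SHIPPING_THRESHOLD = 50.0  # assumed value, for illustration only
STANDARD_FEE = 4.99             # assumed value, for illustration only


def shipping_fee(order_total: float) -> float:
    """Hypothetical implementation of 'orders over 50 ship free'."""
    return 0.0 if order_total > FREE_SHIPPING_THRESHOLD else STANDARD_FEE


def test_shipping_fee_at_threshold() -> None:
    # The expectation comes from the same reading of the requirement as the
    # code, so the test and the implementation share one assumption.
    assert shipping_fee(50.0) == STANDARD_FEE
    assert shipping_fee(50.01) == 0.0


# Passes silently: the behavior matches the written expectation.
test_shipping_fee_at_threshold()
```

The green result only confirms that behavior matches the expectation as written. Whether a customer who spends exactly 50 should pay for shipping is a product question, and it takes a person reading the requirement to raise it.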

Where the Real Problems Actually Live

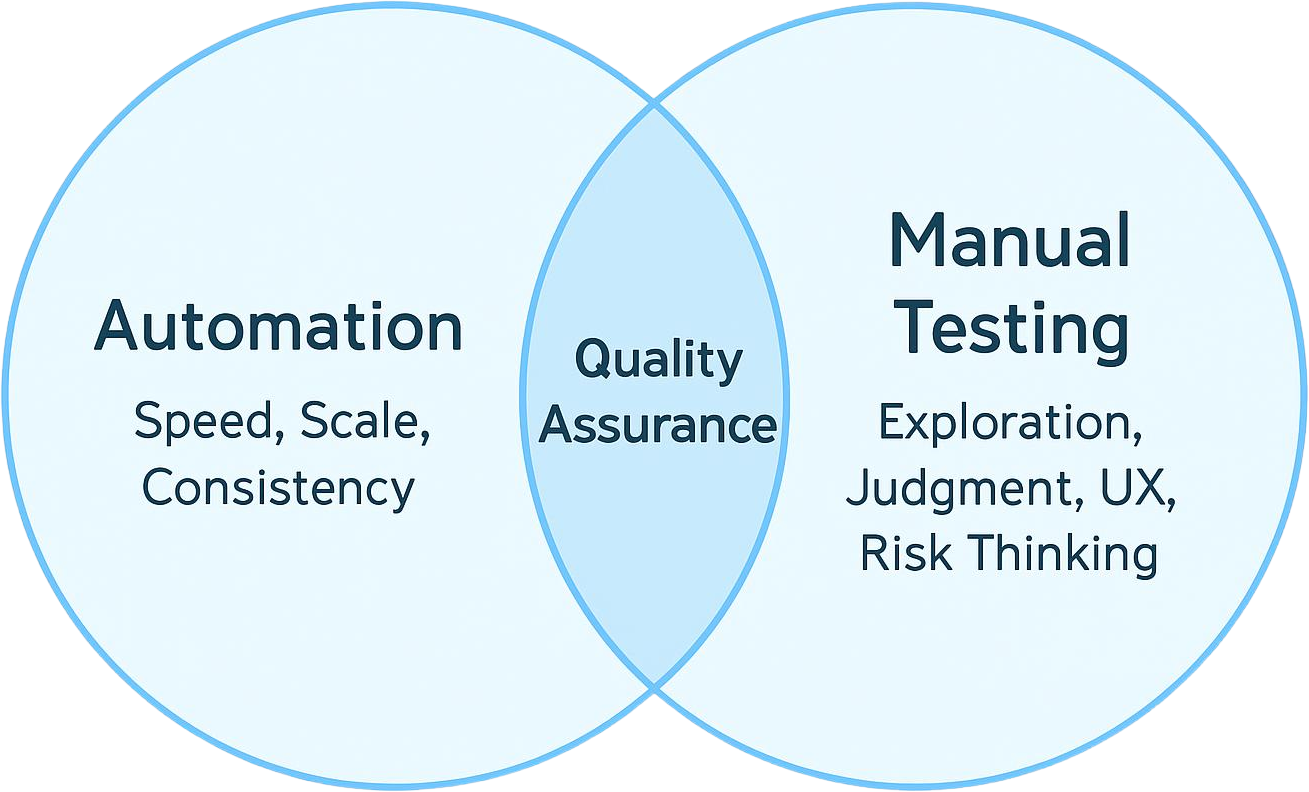

Automation tests correctness.

Manual testing explores experience + risk.

That gap is where most real problems live.

Because users don’t think in terms of:

“Expected vs actual result”

They think in terms of:

👉 “Does this make sense?”

👉 “Why is this confusing?”

👉 “Can I trust this product?”

And those questions don’t return a boolean result.

A Quiet Failure Scenario

Imagine this:

Your automation suite passes 100%.

Payments work

APIs respond correctly

UI elements function as expected

Everything is green.

But users are abandoning the checkout flow at the pricing step. Not because it is broken, but because the copy creates hesitation nobody thought to test for.

Why?

Pricing feels unclear

Flow creates hesitation

Something feels… off

No test failed.

But the product did.

Where Manual Testers Still Win

Even in highly automated systems, manual testers outperform automation in key areas:

1. Ambiguity

Requirements are rarely perfect.

Humans interpret. Automation executes.

2. Exploratory Thinking

Unexpected flows. Strange inputs. Real-world chaos.

3. UX Judgment

“Feels wrong” cannot be asserted in code.

4. Risk Anticipation

Experienced testers see patterns before failure happens.

And What About AI?

AI changes the game.

It can:

Generate test cases

Suggest edge scenarios

Analyze logs faster than humans

But here’s what AI still depends on:

👉 Data

👉 Context

👉 Problem framing

If those are flawed…

AI doesn’t fix the problem.

It scales the flaw.

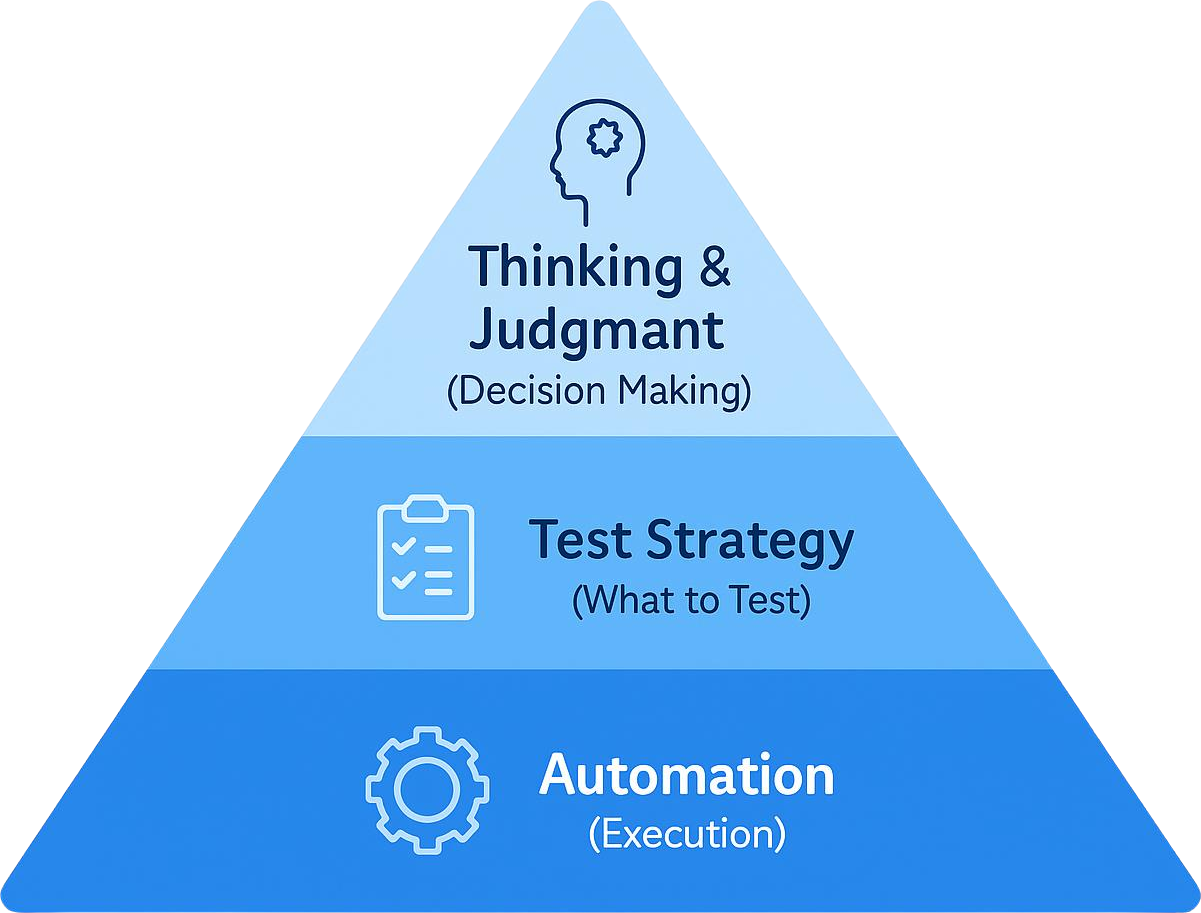

The More Automation Grows, The More Thinking Matters

The more automation grows, the more valuable thinking becomes.

Because someone still needs to:

Decide what to automate

Interpret results beyond pass/fail

Identify what’s missing, not just what’s covered

Automation increases speed.

But thinking defines direction.

Final Thought

Automation is not the enemy of manual testing.

It’s an amplifier.

But amplification without direction is dangerous.

Because you don’t just scale what’s right.

You scale what you assume is right.
