I Built an AI-Assisted Release Confidence Engine

Instead of a Pass/Fail Gate

After building that test failure explainer I wrote about last week, something became really obvious.

Failures aren’t the real decision point.

Shipping is.

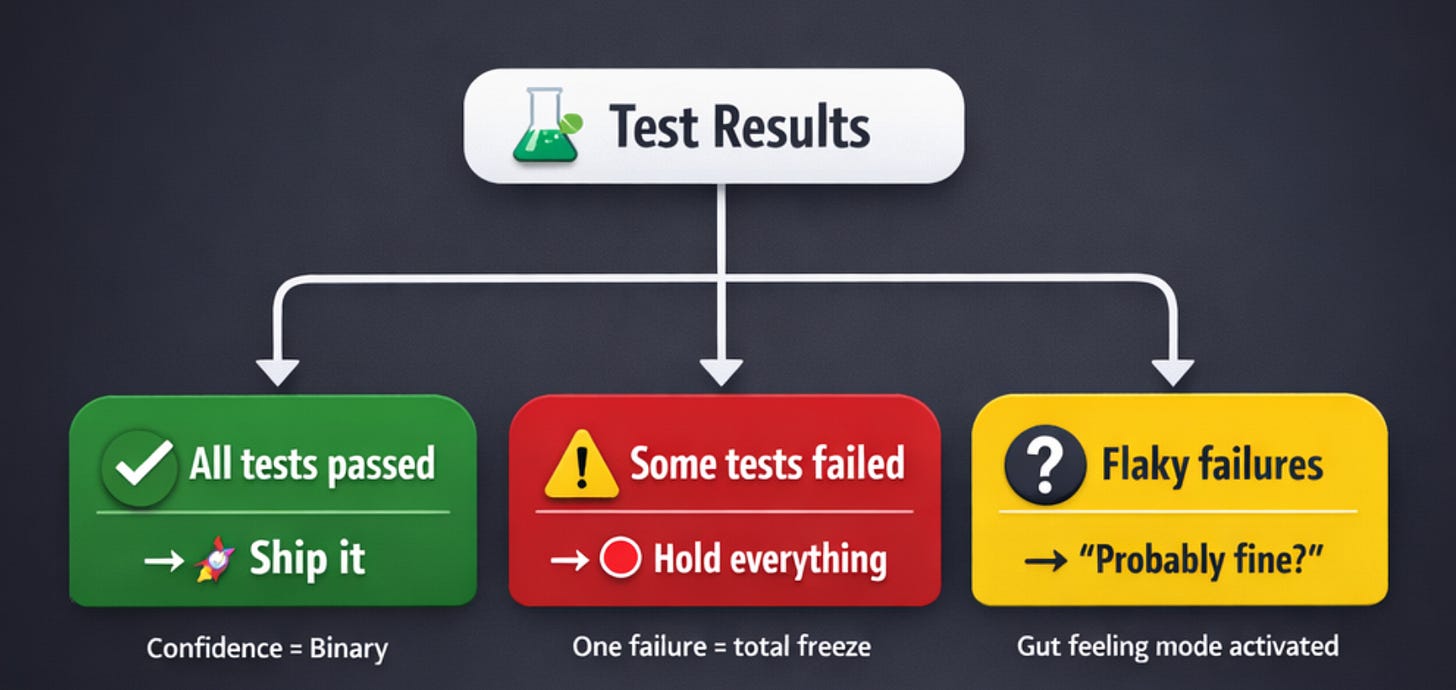

Most release decisions still look like this:

All tests passed → Ship it

Some tests failed → Hold everything

Flaky failures → “Probably fine?”

That’s not quality engineering. That’s checkbox engineering.

So I built something slightly more ambitious: a system that converts test signals into a structured release confidence score.

Not automation of approval. Not replacing QA sign-off. Just structured reasoning at release time.

Here’s what happened.

The Real Problem (It’s Not What You Think)

CI pipelines are noise machines.

A release decision actually depends on a bunch of signals:

How many failures and what type

Historical flakiness patterns

Which components are impacted

The risk profile of this specific release

But these signals live in completely different places. Test reports. Jira tickets. Git diffs. Random Slack threads at 4 PM on Friday.

Humans manually synthesize all of this under time pressure.

That’s the actual bottleneck.

After 14 years of watching release meetings, I can tell you: the problem isn’t that we don’t have enough data. It’s that we have too much, and no structured way to think about it.

What I Explicitly Did NOT Build

This matters more than what I built.

I avoided:

Auto-blocking releases

Auto-approving builds

Touching the pipeline itself

The system produces a structured “confidence narrative.” That’s it.

Humans still make the call.

Why? Because I’ve seen what happens when automation starts making release decisions without context. It becomes this thing that everyone routes around. You end up with more problems than you started with.

The Inputs (Nothing Magical)

For each release candidate, I aggregated:

Total tests run

Failure categories (using my earlier failure explainer)

Flaky test history

Code change size

Impacted services

Recent defect trends

Just structured signals. Nothing you don’t already have.

The Key Design Choice That Changed Everything

Instead of asking “Is this release safe?”

I reframed it:

What are the risk drivers?

What failure types increased compared to baseline?

Are failures clustered in critical paths?

Is this regression pattern consistent with the change size?

That framing changed everything.

Here’s what I learned: LLMs are actually pretty good at structured synthesis. They’re terrible at binary judgment.

So I stopped asking it to judge and started asking it to synthesize.

How the System Actually Works

The workflow is straightforward:

Aggregate structured release data

Normalize it (same principle as the failure explainer—clean inputs matter)

Send it to the LLM with very specific constraints:

Highlight risk clusters

Compare against historical baseline

Avoid absolute statements

Explicitly state uncertainty

The output format:

Risk factors identified

Stability signals

Areas requiring human review

Suggested confidence band (High / Moderate / Low)

Not a verdict. A reasoning summary.

The kind of thing that would take a human 20 minutes to compile, but the LLM does in seconds.

What Surprised Me

Small releases sometimes got flagged as low confidence.

Large releases sometimes scored higher.

This felt wrong at first. Surely bigger changes = more risk?

But then I looked closer:

Change size doesn’t equal risk

Failure type matters way more than failure count

Clustering predicts instability better than totals

A 5-line config change that breaks auth is riskier than a 500-line refactor in a well-tested util library.

This completely changed how I think about regression signals.

What Broke (Learn From My Mistakes)

The first version over-weighted failure count.

It would punish releases for:

Known flaky tests that nobody cares about

Low-impact modules that don’t affect users

So I introduced:

Historical flakiness discounting

Service criticality weighting

Regression vs known issue differentiation

The system improved immediately.

Not because I changed the model. Because I changed the framing.

Same lesson as the failure explainer: clean inputs and good prompts beat smart models.

Where This Actually Helps

This system is useful for:

QE leads making decisions under time pressure

Engineering managers reviewing release health

Cross-team alignment discussions

Avoiding those emotional “ship vs don’t ship” debates at 5 PM

It creates structured conversation instead of gut feelings and arguments.

That’s valuable.

What This Is NOT

Let me be very clear.

This is not:

A replacement for QA

A safety certification

A risk oracle

It is: a reasoning layer over noisy test systems.

It’s the same philosophy as the failure explainer. It doesn’t make decisions. It helps humans make better decisions, faster.

The Pattern I Keep Seeing

Both the failure explainer and this confidence engine follow the same principle:

Don’t automate judgment. Automate synthesis.

The judgment still needs human context. But the synthesis—pulling together scattered signals, normalizing data, identifying patterns—that’s where AI actually shines.

Every time I’ve tried to push AI further into the “decision” territory, it breaks down. Trust erodes. People route around it.

But when I keep it in the “助理” territory—assistant, not replacement—it works.

If I Were Scaling This

At 10× usage, I’d add:

Release confidence trends over time

Prediction accuracy tracking (did low-confidence releases actually have issues?)

Team-specific risk tolerance calibration

Integration with incident management systems

At 100×, this becomes a release intelligence platform that learns from outcomes and gets better at synthesis.

But I’d never let it auto-approve or auto-block. That line is important.

Final Thought

Look, I’ve been in enough release meetings to know that most of the stress comes from uncertainty, not risk.

“Should we ship this?” is a hard question when you’re staring at 15 test failures, 3 known flaky tests, a code change that touches 12 files, and a deployment window that closes in 2 hours.

This system doesn’t eliminate risk. It eliminates confusion.

And honestly? That’s more valuable than any “smart” automation that tries to make the call for you.